Oh! It’s been a while since I wrote a blog post!

Sorry about that! I’ve been very busy lately!

In this post I will explain a very cool robotics project I did last year. It is as cool as it sounds: making a drone follow a Roomba using computer vision!

The idea can be broken down into several parts: the vehicle, the communications, the control, the computer vision, and the results…

The objective of this project is to make a drone “see” a Roomba and follow it from the air.

On the vehicle side, I used the BigX, a great (and big!) vehicle with very nice performance. Here are some pics of it:

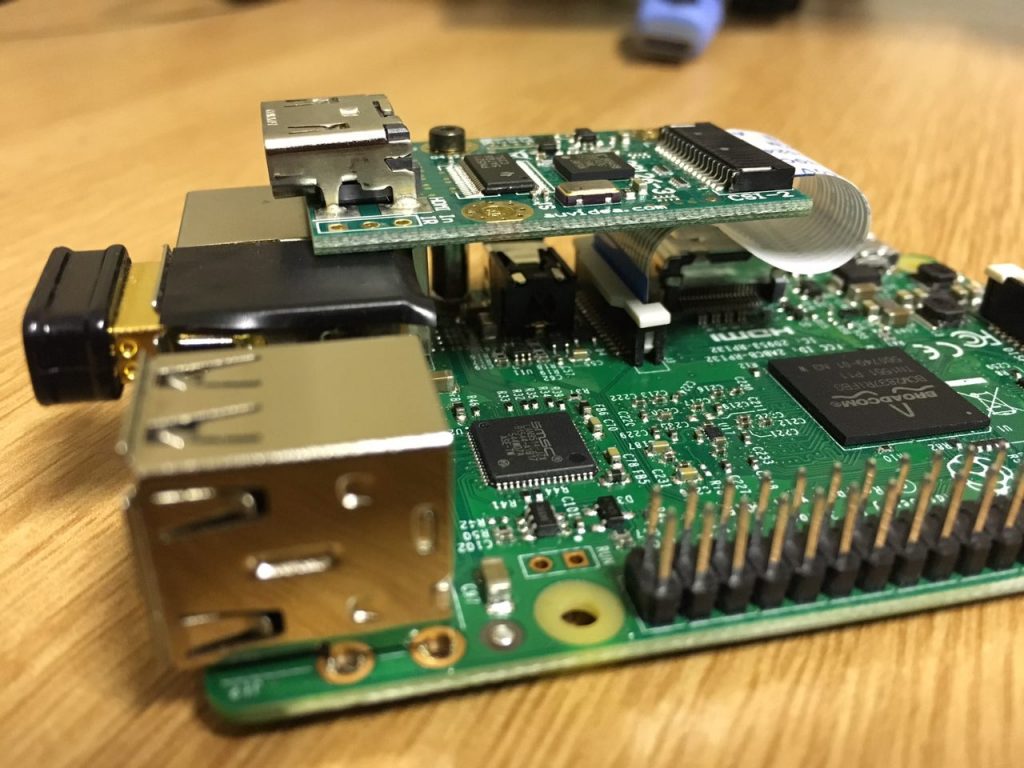

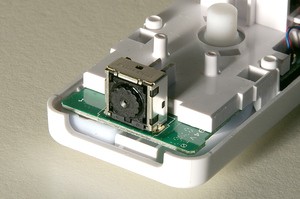

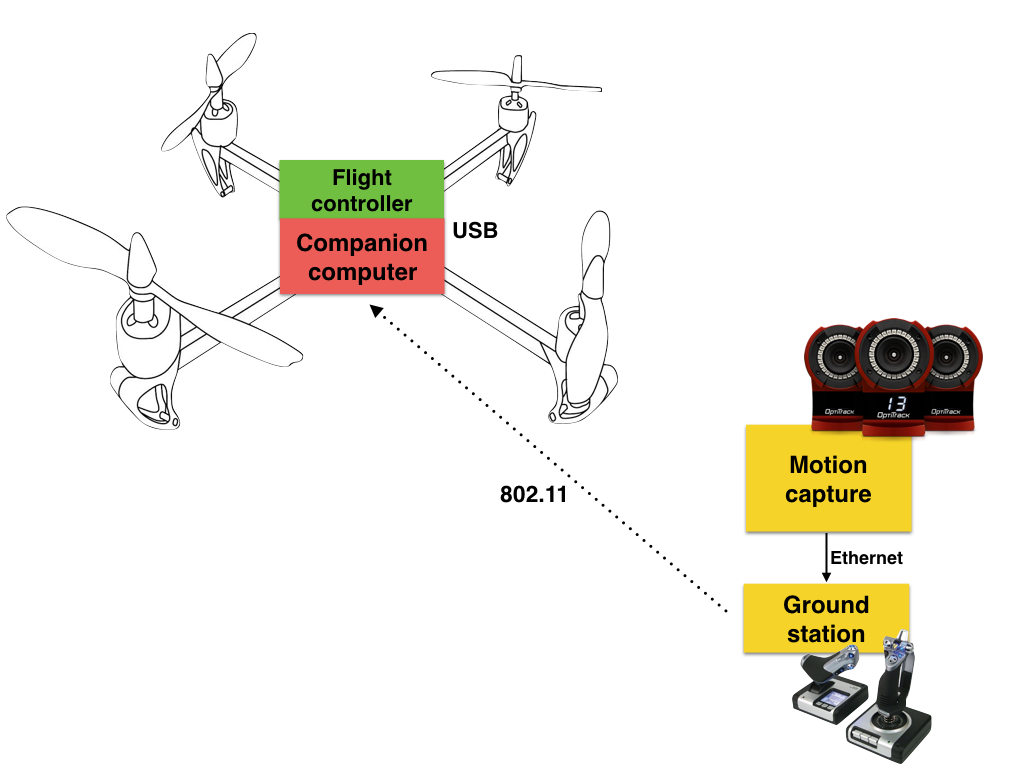

On board I used the same Flight Stack concept, which is the combination of a flight controller (a Pixhack in this case) and a Raspberry Pi with extra features.

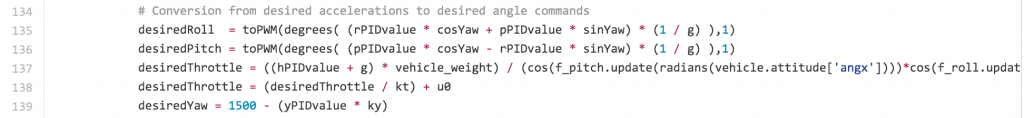

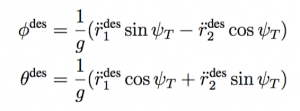

The RPI was a Raspberry Pi 3, and it’s the one in charge of “piloting” the aircraft when I’m not doing it. The RPI also runs the computer vision algorithm that lets the drone “see” the Roomba. With that information (the target position in pixels, X and Y), the RPI computes the velocity commands needed to steer the vehicle over the center of the target.
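To make the pixels-to-velocity step more concrete, here is a minimal sketch of a proportional controller. It is not the code that flew on the BigX: the function name, the 640x480 frame size, the gain, the speed limit, the deadband, and the camera orientation (pointing straight down, with the top of the image toward the nose of the vehicle) are all assumptions made for illustration.

```python
def pixel_error_to_velocity(cx, cy, width=640, height=480,
                            kp=0.5, max_speed=1.0, deadband=0.05):
    """Map a target position in pixels to a (vx, vy) velocity command in m/s.

    Hypothetical helper: all defaults are illustrative assumptions.
    vx is forward (+) / backward (-), vy is right (+) / left (-).
    """
    # Normalized error in [-1, 1]; (0, 0) means the target is centered.
    ex = (cx - width / 2) / (width / 2)
    ey = (cy - height / 2) / (height / 2)

    # Ignore tiny errors so the vehicle does not jitter around the center.
    if abs(ex) < deadband:
        ex = 0.0
    if abs(ey) < deadband:
        ey = 0.0

    # Assumed camera mounting: looking straight down, image top = vehicle nose.
    # Target above the image center (ey < 0) -> fly forward (vx > 0).
    # Target right of the image center (ex > 0) -> fly right (vy > 0).
    vx = -kp * ey
    vy = kp * ex

    # Clamp each axis to the speed limit.
    vx = max(-max_speed, min(max_speed, vx))
    vy = max(-max_speed, min(max_speed, vy))
    return vx, vy
```

With these assumed defaults, a target at the right edge of the frame produces a command to move right at 0.5 m/s, and a centered target produces no motion. In a real setup the resulting pair would be sent to the flight controller as a velocity setpoint.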

The RPI was also in charge of the communications: it created a network, and on the ground I used a Ubiquiti AP connected via Ethernet to my MBPR. I used this configuration because that AP gave me a range of 10 km LoS (I only tried it up to 3 km…)

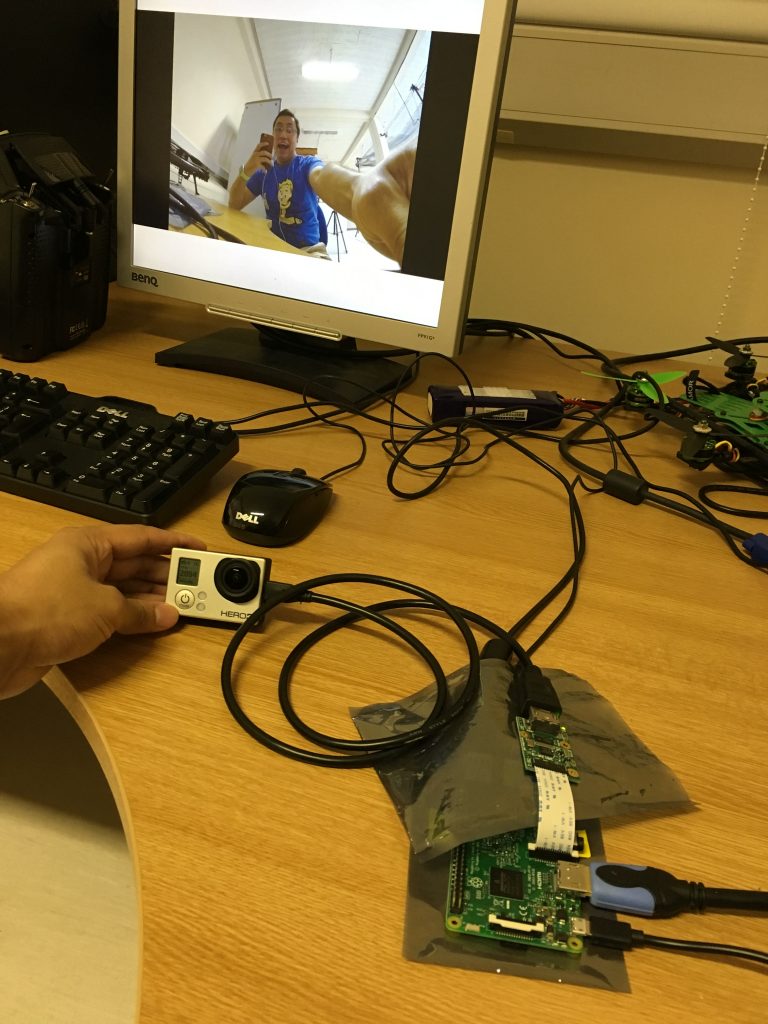

Also on board, a B101 HDMI bridge connected to the RPI captured the frames from the GoPro camera so they could be analyzed.

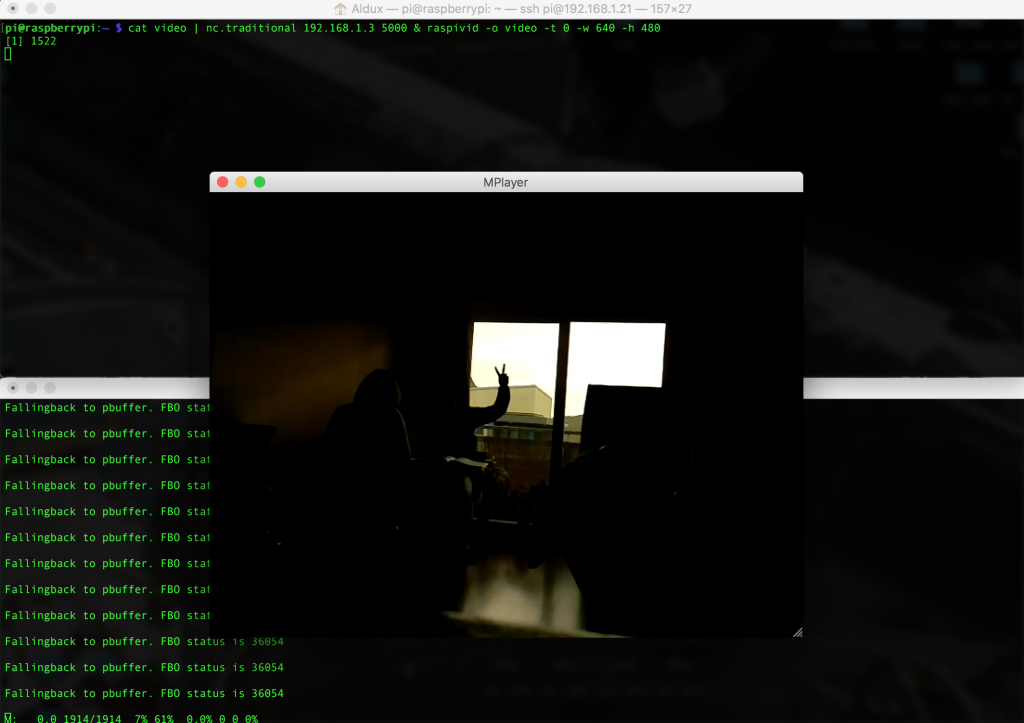

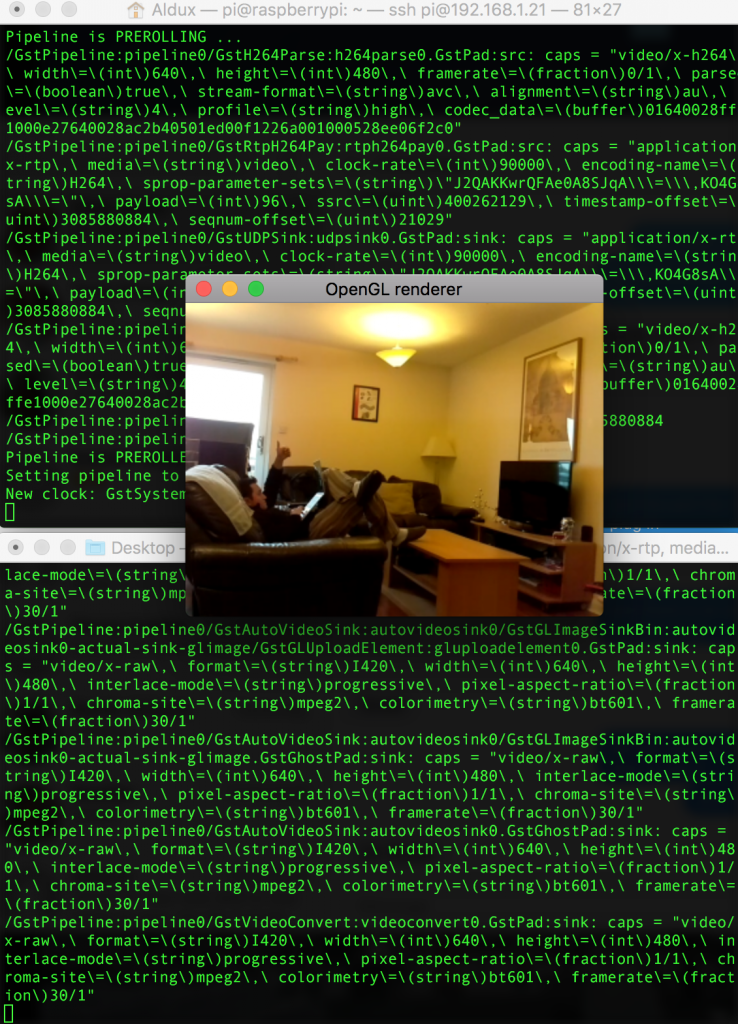

On the ground side, as I mentioned before, I had the Ubiquiti AP with my laptop connected to it via Ethernet. My computer logged in to the RPI via SSH to launch the scripts that run the main stuff. I also had QGroundControl open to see the telemetry of the vehicle in a nice way. I was using MAVProxy with UDP casts. Here is how my computer screen looked:

In the image above you can see the position teleoperation tool from the AltaX ground station program. This module changes the position of the robot by reading keyboard input on the ground station computer. Pretty neat…

On the computer vision side, I added blue tape to the top of the Roomba to make it easy to distinguish from the environment. I also tuned my different color tracker algorithms as much as possible; you can find the code here and a demonstration video here.
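For readers who have not seen a color tracker before, here is a tiny dependency-free sketch of the general idea: threshold the pixels in HSV space and take the centroid of the ones that look blue. This is only an illustration and not my actual tracker. The function name, the frame format (a list of rows of RGB tuples), and the threshold values are assumptions, and a real implementation would more likely use an image library such as OpenCV on the camera frames.

```python
import colorsys


def find_blue_centroid(frame, hue_range=(0.55, 0.72),
                       min_sat=0.5, min_val=0.3, min_pixels=4):
    """Return the (x, y) centroid of the blue pixels in a frame, or None.

    Hypothetical helper: `frame` is a list of rows, each row a list of
    (r, g, b) tuples with values in 0..255. Thresholds are illustrative.
    """
    sum_x = 0
    sum_y = 0
    count = 0
    for y, row in enumerate(frame):
        for x, (r, g, b) in enumerate(row):
            # colorsys works on floats in [0, 1] and returns hue in [0, 1].
            h, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
            is_blue = hue_range[0] <= h <= hue_range[1]
            if is_blue and s >= min_sat and v >= min_val:
                sum_x += x
                sum_y += y
                count += 1

    # Too few matching pixels is treated as "target not found".
    if count < min_pixels:
        return None
    return sum_x / count, sum_y / count
```

The returned centroid is the "position in pixels X and Y" that the control step needs. Returning `None` when too few pixels match lets the caller decide what to do when the target is lost, for example hold position.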

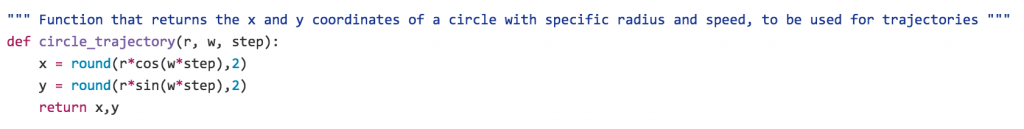

When you combine all the ingredients and add a velocity-vector position controller, you get a nice result, like the one shown in this video:
